Building a 15-Year CrossFit Open Database with Claude (And Auditing the Results)

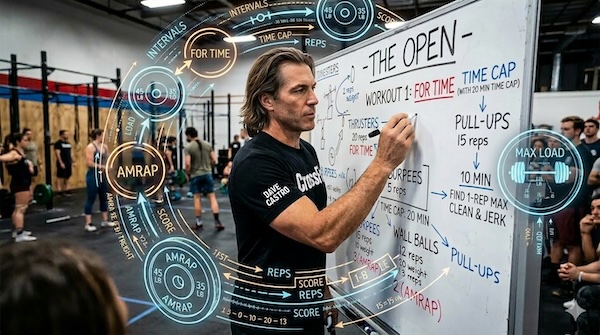

The 2026 Open just wrapped. And now that the gym is back to normal, I find myself doing what I always do: overthinking the Open workouts. Which movements keep showing up? Are AMRAPs actually more common than For Time workouts, or does it just feel that way? And is the rig ladder format — the one that ends every Open with a pull-up-to-C2B-to-muscle-up escalation — a recent programming method?


To answer my questions, I would need to aggregate disparate datasets from the CrossFit Games website or scrape wodwell.com and similar sites. Pulling it all together sounded time-consuming and awful. So I didn't. Instead, I handed the job to Claude and evaluated its decisions.

This post is about how that went — what Claude already knew, what it had to look up, how it chose to store everything, and how it validated its dataset. The findings will follow in an upcoming post. But the process turned out to be interesting enough to deserve its own story.

The Assignment

I gave Claude a goal: build a complete database of every CrossFit Open workout from 11.1 through 26.3, covering every Open from 2011 to 2026. For each workout, capture the format (AMRAP, For Time, Max Load), the time cap, and every movement. My only requirement was that the result be queryable.

What I deliberately didn’t specify: how to store it, what schema to use, what tools to reach for, or how to handle gaps in its knowledge. I wanted to see how far I could get without telling it what to do.

What Claude Already Knew

According to Claude, its training data covered the CrossFit Open back to 2011, but not evenly. For 2011 through 2020, Claude had high confidence: all 50 workouts, with full movement data, formats, descriptions, and time caps, were already in its knowledge. For 2021 and 2022, confidence dropped noticeably. Claude had workout stubs (it knew these workouts existed and had their formats and time caps), but it flagged the movement data as uncertain and left it blank. It knew 21.2 happened. It didn’t know what was in it. For 2023 through 2026, Claude drew a blank on movements entirely.

To fill those gaps, Claude went to the web on its own: games.crossfit.com, wodwell.com, and wodprep.com. The 2021–2026 movement data was patched in from those sources.
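Stubs like 21.2 are easy to surface once the data is in tables. Here is a minimal sketch using Python's built-in sqlite3 module, assuming the table and column names from the schema shown later in this post. It runs a LEFT JOIN that lists every workout with no movement rows. The 21.2 row is an illustrative stub whose format and movements are deliberately left empty.

```python
import sqlite3

# Minimal subset of the schema shown later in this post (names assumed from that listing).
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE workouts (
    workout_id TEXT PRIMARY KEY,
    year       INTEGER,
    format     TEXT
);
CREATE TABLE workout_movements (
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    workout_id    TEXT REFERENCES workouts(workout_id),
    movement      TEXT,
    movement_type TEXT
);
""")

# 11.1 has movement rows; 21.2 is a stub whose movements were left blank.
conn.executemany(
    "INSERT INTO workouts VALUES (?, ?, ?)",
    [("11.1", 2011, "AMRAP"), ("21.2", 2021, None)],
)
conn.executemany(
    "INSERT INTO workout_movements (workout_id, movement, movement_type) VALUES (?, ?, ?)",
    [("11.1", "double unders", "conditioning"), ("11.1", "power snatch", "barbell")],
)

# Workouts with zero matching movement rows are the gaps to fill from the web.
stubs = [row[0] for row in conn.execute("""
    SELECT w.workout_id
    FROM workouts AS w
    LEFT JOIN workout_movements AS m ON m.workout_id = w.workout_id
    WHERE m.id IS NULL
    ORDER BY w.workout_id
""")]
print(stubs)  # ['21.2']
```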

How Claude Built the Database

The only requirement I gave Claude was that the data should be queryable. Claude chose SQLite, a proper relational database. That seemed reasonable: SQLite is lightweight and local, works with Python’s standard library without any extra installs, and gives you the power of SQL out of the box.

The schema Claude designed has two tables.

workouts — one row per workout, capturing the metadata: workout ID (e.g. 11.6, 23.2b), year, format, time cap, score type, whether it has a scaled version, and whether it’s a repeat of a prior workout.
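Because repeat_of stores the original workout's ID, repeats can be pulled out with a self-join on the workouts table. This is a minimal sketch, assuming repeat_of holds a workout_id as the schema comment describes. The three rows are illustrative; 14.1 was a repeat of 11.1.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE workouts (
    workout_id TEXT PRIMARY KEY,
    year       INTEGER,
    format     TEXT,
    repeat_of  TEXT REFERENCES workouts(workout_id)  -- NULL unless this is a repeat
);
INSERT INTO workouts VALUES
    ('11.1', 2011, 'AMRAP', NULL),
    ('11.2', 2011, 'AMRAP', NULL),
    ('14.1', 2014, 'AMRAP', '11.1');
""")

# Join each repeat (r) back to its original (o) and measure the gap in years.
repeats = conn.execute("""
    SELECT r.workout_id, o.workout_id, r.year - o.year AS years_later
    FROM workouts AS r
    JOIN workouts AS o ON o.workout_id = r.repeat_of
    ORDER BY r.workout_id
""").fetchall()
print(repeats)  # [('14.1', '11.1', 3)]
```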

workout_movements — one row per movement per workout. According to Claude, this design makes co-occurrence queries trivial: find all pairs of movements that share a workout_id. A question like “how many times did thrusters and C2B appear in the same workout?” would require ugly string parsing if movements were stored as a list. In other words, the schema anticipated my questions, which I thought was clever.
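With one row per movement, "how often do two movements appear together?" becomes a self-join on workout_id instead of string parsing. Below is a minimal sketch. The helper name times_together is hypothetical, and the rows come from the 2011 snapshot shown later in this post. For the thrusters and C2B question, you would pass whatever movement names the database actually stores (for example "chest-to-bar pull-up"; "thruster" is an assumed spelling).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE workout_movements (
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    workout_id    TEXT,
    movement      TEXT,
    movement_type TEXT
)""")
# Rows from the 2011 snapshot of workout_movements.
rows = [
    ("11.1", "double unders", "conditioning"),
    ("11.1", "power snatch", "barbell"),
    ("11.2", "deadlift", "barbell"),
    ("11.2", "hand-release push-up", "gymnastics"),
    ("11.2", "box jump", "conditioning"),
]
conn.executemany(
    "INSERT INTO workout_movements (workout_id, movement, movement_type) VALUES (?, ?, ?)",
    rows,
)

def times_together(conn, a, b):
    """Count distinct workouts in which movements a and b both appear (hypothetical helper)."""
    (count,) = conn.execute("""
        SELECT COUNT(DISTINCT m1.workout_id)
        FROM workout_movements AS m1
        JOIN workout_movements AS m2 ON m1.workout_id = m2.workout_id
        WHERE m1.movement = ? AND m2.movement = ?
    """, (a, b)).fetchone()
    return count

print(times_together(conn, "deadlift", "box jump"))      # 1
print(times_together(conn, "deadlift", "power snatch"))  # 0
```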

Claude bucketed movements into four types: barbell, gymnastics, conditioning, and dumbbell. According to Claude, it did so to support trend analysis by era, with questions like “is gymnastics volume increasing over time?” and “are dumbbells slowly replacing barbells?”
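One way to ask "is gymnastics volume increasing?" against this schema is to compute, per year, the share of movement rows in a given category. That share is an assumed definition of "volume". The schema stores movements, not reps, so rep-weighted volume isn't available. This is a minimal sketch with a hypothetical helper, type_share_by_year, run on the 11.1 and 11.2 rows from the snapshot later in this post.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE workouts (workout_id TEXT PRIMARY KEY, year INTEGER);
CREATE TABLE workout_movements (
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    workout_id    TEXT REFERENCES workouts(workout_id),
    movement      TEXT,
    movement_type TEXT
);
INSERT INTO workouts VALUES ('11.1', 2011), ('11.2', 2011);
INSERT INTO workout_movements (workout_id, movement, movement_type) VALUES
    ('11.1', 'double unders', 'conditioning'),
    ('11.1', 'power snatch', 'barbell'),
    ('11.2', 'deadlift', 'barbell'),
    ('11.2', 'hand-release push-up', 'gymnastics'),
    ('11.2', 'box jump', 'conditioning');
""")

def type_share_by_year(conn, movement_type):
    """Fraction of each year's movement rows whose movement_type matches (hypothetical helper)."""
    query = """
        SELECT w.year,
               AVG(CASE WHEN m.movement_type = ? THEN 1.0 ELSE 0.0 END) AS share
        FROM workout_movements AS m
        JOIN workouts AS w ON w.workout_id = m.workout_id
        GROUP BY w.year
        ORDER BY w.year
    """
    return dict(conn.execute(query, (movement_type,)).fetchall())

print(type_share_by_year(conn, "gymnastics"))  # {2011: 0.2}
```

With all 15 years loaded, a rising share from year to year would be one (rough) signal of the trend Claude had in mind.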

On top of the two tables, Claude pre-built three SQL views:

- movement_frequency: how often each movement appears, as a count and percentage of all workouts
- movement_cooccurrence: every movement pair and how many times they’ve appeared together
- yearly_movement_types: breakdown of movement categories by year
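The post doesn't show Claude's actual view definitions, so the following is only a guess at SQL that matches the first two descriptions. movement_frequency divides each movement's workout count by the total number of workouts. movement_cooccurrence self-joins workout_movements and keeps m1.movement < m2.movement so each unordered pair is counted once. The output column names (workouts, pct, movement_a, movement_b, times_together) are assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE workouts (workout_id TEXT PRIMARY KEY, year INTEGER);
CREATE TABLE workout_movements (
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    workout_id    TEXT REFERENCES workouts(workout_id),
    movement      TEXT,
    movement_type TEXT
);

-- How often each movement appears: count and percentage of all workouts.
CREATE VIEW movement_frequency AS
SELECT m.movement,
       COUNT(DISTINCT m.workout_id) AS workouts,
       ROUND(100.0 * COUNT(DISTINCT m.workout_id) / (SELECT COUNT(*) FROM workouts), 1) AS pct
FROM workout_movements AS m
GROUP BY m.movement;

-- Every unordered movement pair and how many workouts contain both.
CREATE VIEW movement_cooccurrence AS
SELECT m1.movement AS movement_a,
       m2.movement AS movement_b,
       COUNT(DISTINCT m1.workout_id) AS times_together
FROM workout_movements AS m1
JOIN workout_movements AS m2
  ON m1.workout_id = m2.workout_id AND m1.movement < m2.movement
GROUP BY m1.movement, m2.movement;

INSERT INTO workouts VALUES ('11.1', 2011), ('11.2', 2011);
INSERT INTO workout_movements (workout_id, movement, movement_type) VALUES
    ('11.1', 'double unders', 'conditioning'),
    ('11.1', 'power snatch', 'barbell'),
    ('11.2', 'deadlift', 'barbell'),
    ('11.2', 'hand-release push-up', 'gymnastics'),
    ('11.2', 'box jump', 'conditioning');
""")

freq = conn.execute(
    "SELECT movement, workouts, pct FROM movement_frequency ORDER BY movement"
).fetchall()
pairs = conn.execute(
    "SELECT movement_a, movement_b, times_together FROM movement_cooccurrence "
    "ORDER BY movement_a, movement_b"
).fetchall()
print(freq)
print(pairs)
```

Because views are stored queries, they stay current as corrections land in the underlying tables.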

The main point here is that Claude built the tables and these views in anticipation of the kinds of questions I would ask. I suspect I’m not the first person to ask them, and the fact that Claude anticipated them suggests others have made similar inquiries.

A Look Inside the Database

Here’s the schema:

workouts

| column | type | notes |
|---|---|---|
| workout_id | TEXT PRIMARY KEY | e.g. "11.6", "21.3", "23.2b" |
| year | INTEGER | |
| workout_num | TEXT | |
| format | TEXT | AMRAP, For Time, or Max Load |
| time_cap_mins | REAL | |
| score_type | TEXT | rounds+reps, time, or load |
| has_scaled | INTEGER | 0 or 1 |
| repeat_of | TEXT | workout_id of original, if a repeat |
| description | TEXT | |
| notes | TEXT | |

workout_movements

| column | type | notes |
|---|---|---|
| id | INTEGER PRIMARY KEY AUTOINCREMENT | |
| workout_id | TEXT, FOREIGN KEY → workouts | |
| movement | TEXT | e.g. "chest-to-bar pull-up" |
| movement_type | TEXT | barbell, gymnastics, conditioning, or dumbbell |
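The schema listing above maps onto SQLite DDL roughly as follows. This is a sketch, not Claude's actual CREATE statements. The "FOREIGN KEY → workouts" entry is written as a REFERENCES clause, and enforcement is switched on with PRAGMA foreign_keys, because SQLite does not enforce foreign keys by default. The one inserted workout uses only values from the 11.1 snapshot row.

```python
import sqlite3

DDL = """
CREATE TABLE workouts (
    workout_id    TEXT PRIMARY KEY,  -- e.g. "11.6", "21.3", "23.2b"
    year          INTEGER,
    workout_num   TEXT,
    format        TEXT,              -- AMRAP | For Time | Max Load
    time_cap_mins REAL,
    score_type    TEXT,              -- rounds+reps | time | load
    has_scaled    INTEGER,           -- 0 or 1
    repeat_of     TEXT,              -- workout_id of original, if a repeat
    description   TEXT,
    notes         TEXT
);
CREATE TABLE workout_movements (
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    workout_id    TEXT REFERENCES workouts(workout_id),
    movement      TEXT,              -- e.g. "chest-to-bar pull-up"
    movement_type TEXT               -- barbell | gymnastics | conditioning | dumbbell
);
"""

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # off by default; must be enabled per connection
conn.executescript(DDL)

conn.execute(
    "INSERT INTO workouts (workout_id, year, format, time_cap_mins, description) "
    "VALUES (?, ?, ?, ?, ?)",
    ("11.1", 2011, "AMRAP", 10, "30 Double Unders, 15 Power Snatches (75/55 lb)"),
)
conn.execute(
    "INSERT INTO workout_movements (workout_id, movement, movement_type) VALUES (?, ?, ?)",
    ("11.1", "double unders", "conditioning"),
)

# A movement row that points at a nonexistent workout is rejected.
try:
    conn.execute(
        "INSERT INTO workout_movements (workout_id, movement, movement_type) VALUES (?, ?, ?)",
        ("99.9", "thruster", "barbell"),
    )
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True
print(orphan_rejected)  # True
```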

And a snapshot of the first five rows of each:

workouts

| workout_id | year | format | time_cap | description |
|---|---|---|---|---|
| 11.1 | 2011 | AMRAP | 10 min | 30 Double Unders, 15 Power Snatches (75/55 lb) |
| 11.2 | 2011 | AMRAP | 15 min | 9 Deadlifts (155/100 lb), 12 Hand-Release Push-ups, 15 Box Jumps (24/20 in) |
| 11.3 | 2011 | AMRAP | 5 min | Squat Clean and Jerk ladder (165/110 lb) |
| 11.4 | 2011 | AMRAP | 10 min | 60 Burpees, 30 Overhead Squats (120/90 lb), 10 Ring Muscle-ups |
| 11.5 | 2011 | AMRAP | 20 min | 5 Power Cleans (145/100 lb), 10 Toes-to-bar, 15 Wall Balls (20/14 lb) |

workout_movements

| id | workout_id | movement | movement_type |
|---|---|---|---|
| 1 | 11.1 | double unders | conditioning |
| 2 | 11.1 | power snatch | barbell |
| 3 | 11.2 | deadlift | barbell |
| 4 | 11.2 | hand-release push-up | gymnastics |
| 5 | 11.2 | box jump | conditioning |

Validating AI-Sourced Data

Before running any analysis, I prompted Claude to spot-check the database against the official CrossFit Games website — one workout per two-year window, eight checks total.
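The post doesn't say how Claude picked the eight workouts, so here is one hypothetical way to draw "one workout per two-year window". It buckets workouts by (year - 2011) // 2 and picks one at random from each bucket. Over 2011 to 2026, that yields eight buckets. The helper name spot_check_sample and the random choice are assumptions, and the four rows are toy data.

```python
import random
import sqlite3
from collections import defaultdict

def spot_check_sample(conn, first_year=2011, window=2, seed=None):
    """Pick one workout per `window`-year bucket (2011-12, 2013-14, ...). Hypothetical helper."""
    rng = random.Random(seed)
    buckets = defaultdict(list)
    for workout_id, year in conn.execute(
        "SELECT workout_id, year FROM workouts ORDER BY workout_id"
    ):
        buckets[(year - first_year) // window].append(workout_id)
    # One random pick per bucket, in chronological bucket order.
    return [rng.choice(buckets[b]) for b in sorted(buckets)]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE workouts (workout_id TEXT PRIMARY KEY, year INTEGER)")
conn.executemany(
    "INSERT INTO workouts VALUES (?, ?)",
    [("11.1", 2011), ("11.2", 2011), ("12.1", 2012), ("13.1", 2013)],
)
sample = spot_check_sample(conn, seed=0)
print(sample)
```

Passing a seed makes the sample reproducible, which helps if you want to re-run the same checks after corrections.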

Five of eight were exact matches. The three that needed fixes were all minor: a movement name that was too generic (“burpee” instead of “bar-facing burpee”), a description that was slightly off, and one wrong format label. Since the dataset doesn’t contain that many movements, I had Claude validate every movement for every year, and it found another 15 corrections, including some completely incorrect movements.

The moral of the story: don’t trust Claude’s training data. Have it validate the data it pulls. I checked each of its corrections against the CrossFit Games website, and they all held up, so I’m confident the dataset is mostly correct. As already established, there is no way I’m doing a bunch of manual work to clean the dataset for this analysis.
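A full movement audit is easier when each workout's stored movements sit on one line next to its format and description. The sketch below, which is not necessarily how Claude did it, builds that checklist with GROUP_CONCAT for side-by-side comparison against the official workout pages. Note that SQLite does not guarantee the order of the concatenated movements.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE workouts (workout_id TEXT PRIMARY KEY, year INTEGER, format TEXT, description TEXT);
CREATE TABLE workout_movements (
    id            INTEGER PRIMARY KEY AUTOINCREMENT,
    workout_id    TEXT REFERENCES workouts(workout_id),
    movement      TEXT,
    movement_type TEXT
);
INSERT INTO workouts VALUES
    ('11.1', 2011, 'AMRAP', '30 Double Unders, 15 Power Snatches (75/55 lb)'),
    ('11.2', 2011, 'AMRAP', '9 Deadlifts (155/100 lb), 12 Hand-Release Push-ups, 15 Box Jumps (24/20 in)');
INSERT INTO workout_movements (workout_id, movement, movement_type) VALUES
    ('11.1', 'double unders', 'conditioning'),
    ('11.1', 'power snatch', 'barbell'),
    ('11.2', 'deadlift', 'barbell'),
    ('11.2', 'hand-release push-up', 'gymnastics'),
    ('11.2', 'box jump', 'conditioning');
""")

# One row per workout: stored format, stored movements, and stored description.
checklist = conn.execute("""
    SELECT w.workout_id, w.format, GROUP_CONCAT(m.movement, '; ') AS movements, w.description
    FROM workouts AS w
    LEFT JOIN workout_movements AS m ON m.workout_id = w.workout_id
    GROUP BY w.workout_id
    ORDER BY w.workout_id
""").fetchall()
for row in checklist:
    print(" | ".join(str(value) for value in row))
```

Any row where the movements column disagrees with the description (or with the official page) is a candidate correction.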

What’s Next

The database has 73 workouts, 47 distinct movements, and enough data to answer some questions I’ve had about Open workouts. Which movements actually dominate Open programming? What’s the most common pairing CrossFit keeps returning to? Has the style of programming meaningfully changed over 15 years — or does it just feel that way?

All of that in the next post.